Principles of Evidence-Based Practice

Evidence-based practice, also known as evidence-based medicine and evidence-based health care, involves integrating the best research evidence with clinical expertise and patients' circumstances and values (Sackett et al., 1996). This definition emphasizes combining research evidence with clinical expertise and patient characteristics and preferences, reflecting the fact that evidence cannot always be applied uniformly to all patients and often needs to be individualized.

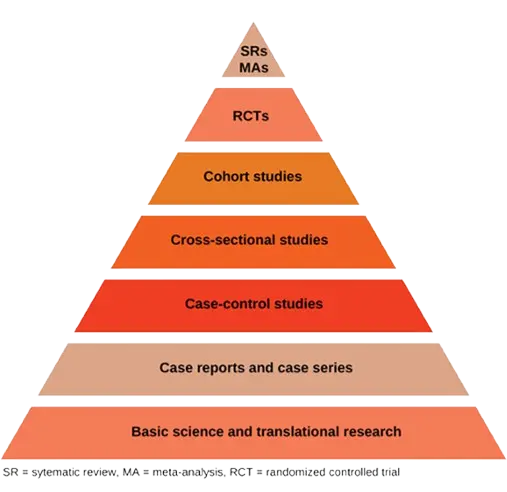

The research side of evidence-based practice focuses on identifying and synthesizing the highest-quality evidence. There is general consensus on a hierarchy of evidence quality, although with some exceptions. This hierarchy is typically conceptualized as an 'evidence pyramid', with the highest level of evidence at the top and the validity of the evidence decreasing at lower levels. Sometimes, however, research at a lower level of the pyramid is more appropriate for answering a specific research question (e.g. when its design is better suited to the question, or its quality is better than that of studies higher on the pyramid). The best choice of study may also vary with clinical context. For example, a study conducted in a certain population may be more applicable to an individual from that population than a meta-analysis that did not stratify by population.

Evidence Sources

Evidence Pyramid

Types of studies and their respective strengths and weaknesses are summarized below (also reviewed in detail by Wallace et al., 2022).

Systematic Reviews & Meta-Analyses

When conducted rigorously, systematic reviews and meta-analyses are generally considered the highest-quality study designs. Systematic reviews synthesize the available evidence from multiple distinct studies and often include meta-analyses, which statistically combine the numerical results of those studies into a single pooled estimate. These approaches require a rigorous search of both published and unpublished literature, appraisal of each relevant study for risk of bias, and an assessment of the quality of the evidence for each outcome.
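To make the idea of combining numerical results more concrete, here is a minimal sketch of one common pooling method, the fixed-effect inverse-variance model, in which each study is weighted by the inverse of its squared standard error. It is an illustration only: the function name `pool_fixed_effect` and the example numbers are hypothetical, effects are assumed to be on an additive scale (e.g. log risk ratios or mean differences), and real meta-analyses often use random-effects models and dedicated software.

```python
import math


def pool_fixed_effect(estimates, std_errors, z=1.96):
    """Pool study effect estimates with fixed-effect inverse-variance weights.

    Hypothetical helper for illustration.
    estimates:  per-study effects on an additive scale (e.g. log risk ratios)
    std_errors: standard error of each estimate
    Returns (pooled estimate, pooled standard error, (CI lower, CI upper)).
    """
    if not estimates or len(estimates) != len(std_errors):
        raise ValueError("need one standard error per estimate")
    # Each study's weight is 1 / SE^2, so more precise studies count more.
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, estimates)) / total_weight
    # The pooled standard error shrinks as the total weight (information) grows.
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se, (pooled - z * pooled_se, pooled + z * pooled_se)


# Two hypothetical studies: the more precise first study dominates the result.
pooled, se, ci = pool_fixed_effect([0.2, 0.4], [0.1, 0.2])
print(round(pooled, 3), round(se, 3), ci)
```

Here the first study carries four times the weight of the second, so the pooled estimate lands much closer to its result than a simple average would.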

Systematic reviews and meta-analyses make readers aware of the current state of the evidence and of gaps where further studies are needed. However, they have limitations. Including studies with biased results can produce an inaccurate qualitative and/or quantitative synthesis ("garbage in → garbage out"), and when heterogeneous studies are combined quantitatively, taking the pooled result at face value can lead to biased conclusions. Meta-analyses conducted by different research groups commonly reach different conclusions because of differences in their study inclusion criteria.
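Heterogeneity is usually quantified before a pooled result is taken at face value. The sketch below computes Cochran's Q and the I² statistic, which describes the approximate proportion of variability across studies that is due to heterogeneity rather than chance, using inverse-variance weights. The function name and inputs are hypothetical, and a high I² is a prompt to investigate why studies differ, not an automatic verdict.

```python
def heterogeneity(estimates, std_errors):
    """Return Cochran's Q and I^2 (as a fraction from 0 to 1) for a set of studies.

    Hypothetical helper for illustration; uses inverse-variance weights.
    """
    if len(estimates) < 2 or len(estimates) != len(std_errors):
        raise ValueError("need at least two studies with matching standard errors")
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, estimates)) / sum(weights)
    # Q: weighted sum of squared deviations of each study from the pooled estimate.
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, estimates))
    df = len(estimates) - 1
    # I^2 = (Q - df) / Q, truncated at zero when Q is below its expected value df.
    i_squared = max(0.0, (q - df) / q) if q > 0 else 0.0
    return q, i_squared


# Two hypothetical, equally precise studies with very different results.
q, i2 = heterogeneity([0.0, 1.0], [0.1, 0.1])
print(f"Q = {q:.1f}, I^2 = {i2:.0%}")
```

In this example nearly all of the observed variation is attributed to heterogeneity, which is exactly the situation in which a single pooled number can mislead.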

Randomized Controlled Trials (RCTs)

RCTs are prospective studies that evaluate the effectiveness of an intervention by comparing it with a placebo or the existing standard of care. They provide higher internal validity than observational methods can typically achieve, and they are the study design best able to establish causation rather than mere correlation or association. Randomization tends to balance confounding factors, both known and unknown, between groups; allocation concealment reduces selection bias; and blinding can help to reduce observation bias. RCTs may have limited generalizability, particularly if they have numerous or restrictive exclusion criteria or low consent rates. Additionally, attrition bias can occur if loss to follow-up or drop-out rates are unbalanced between arms.

Cohort studies

Cohort studies identify a specific population in which a subset of individuals has experienced a particular exposure, and compare rates of disease development over time between exposed and unexposed individuals; they can be either retrospective or prospective. Cohort studies are a good design when the outcome of interest is common, and they have the added benefit of being able to measure multiple outcomes. Although observational studies cannot confirm causation, evaluating the time course of exposures and outcomes allows hypotheses to be generated and can build evidence towards causality. Because of their observational design, cohort studies are prone to confounding bias: even when measured confounders are appropriately addressed statistically, not all confounders are known or measured, so some may remain unaccounted for. Cohort studies may also suffer from attrition bias due to loss to follow-up, and from surveillance bias, because increased monitoring may make certain diseases more likely to be detected in study participants than in the general population.
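As a sketch of the exposed-versus-unexposed comparison described above, the function below computes an unadjusted relative risk (risk ratio): the cumulative incidence of disease among exposed individuals divided by that among unexposed individuals. It assumes complete follow-up over a fixed period; studies with varying follow-up typically use person-time incidence rates instead. The function name and counts are hypothetical.

```python
def relative_risk(exposed_cases, exposed_total, unexposed_cases, unexposed_total):
    """Unadjusted risk ratio from cohort counts (hypothetical helper).

    Assumes every participant was followed for the same fixed period.
    """
    if exposed_total <= 0 or unexposed_total <= 0 or unexposed_cases == 0:
        raise ValueError("totals must be positive and the unexposed group needs cases")
    risk_exposed = exposed_cases / exposed_total        # incidence among exposed
    risk_unexposed = unexposed_cases / unexposed_total  # incidence among unexposed
    return risk_exposed / risk_unexposed


# Hypothetical cohort: 30 of 1,000 exposed and 10 of 1,000 unexposed develop disease.
rr = relative_risk(30, 1000, 10, 1000)
print(round(rr, 2))
```

A relative risk of about 3 means the exposed group developed the disease at roughly three times the rate of the unexposed group; as noted above, unmeasured confounders could still explain some or all of that difference.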

Cross-sectional studies

In cross-sectional studies, data on a population are collected at a single point in time, with exposure and outcome measured simultaneously. These studies are often used to evaluate the diagnostic accuracy of a test or measure. Because exposure and outcome are measured at the same time, the order in which they occurred is unknown, so these studies cannot ascertain or suggest causality.
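Because cross-sectional designs are often used to evaluate diagnostic accuracy, here is a minimal sketch of the two most basic accuracy measures, computed from a 2x2 table of test results against a reference standard. Sensitivity is the proportion of people with the condition who test positive; specificity is the proportion of people without it who test negative. The function name and counts are hypothetical.

```python
def sensitivity_specificity(true_pos, false_pos, false_neg, true_neg):
    """Return (sensitivity, specificity) from a 2x2 table (hypothetical helper)."""
    with_condition = true_pos + false_neg      # positive on the reference standard
    without_condition = true_neg + false_pos   # negative on the reference standard
    if with_condition == 0 or without_condition == 0:
        raise ValueError("need people both with and without the condition")
    sensitivity = true_pos / with_condition
    specificity = true_neg / without_condition
    return sensitivity, specificity


# Hypothetical study: 100 people with the condition, 200 without.
sens, spec = sensitivity_specificity(true_pos=90, false_pos=40, false_neg=10, true_neg=160)
print(sens, spec)
```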

Case-control studies

Case-control studies compare groups of individuals with and without a specific disease, with the aim of identifying risk factors for that disease. They are useful for studying rare diseases that would otherwise require very large cohorts to evaluate. Selection bias can occur if controls are drawn from a different population than cases, and recall bias may occur if cases are more likely than controls to remember an exposure, or vice versa.
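Because case-control studies sample participants by disease status, disease risk cannot be calculated directly; the usual measure of association is instead the odds ratio, which compares the odds of exposure among cases with the odds of exposure among controls. A minimal sketch, with a hypothetical function name and counts:

```python
def odds_ratio(exposed_cases, unexposed_cases, exposed_controls, unexposed_controls):
    """Unadjusted odds ratio: odds of exposure among cases / odds among controls.

    Hypothetical helper; equivalent to (a * d) / (b * c) for a 2x2 table.
    """
    if 0 in (unexposed_cases, exposed_controls, unexposed_controls):
        raise ValueError("zero cell; a continuity correction would be needed")
    odds_cases = exposed_cases / unexposed_cases
    odds_controls = exposed_controls / unexposed_controls
    return odds_cases / odds_controls


# Hypothetical study: 50 of 100 cases and 25 of 100 controls report the exposure.
result = odds_ratio(exposed_cases=50, unexposed_cases=50,
                    exposed_controls=25, unexposed_controls=75)
print(round(result, 2))
```

For a rare disease, the odds ratio approximates the relative risk. Recall bias distorts exactly these exposure counts, which is why it matters so much in this design.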

Case reports and case series

These studies describe an individual clinical case or a series of related cases. They offer clinicians an opportunity to share their experience caring for patients with unusual presentations or rare diseases. However, drawing conclusions from these study types to answer clinical questions is not advised, because of potential biases arising from the small number of patients described and the lack of comparison groups.

Basic science and translational research

Basic science and translational studies, including in vitro and animal studies, often serve as the foundation for clinical research. This type of research is typically used to generate mechanistic hypotheses and to assess the safety of drugs and devices before they are studied in humans. However, the results of these studies are not appropriate as a direct basis for clinical decision-making.

Clinical Decision-Making Pyramid (6S Pyramid)

A second pyramid, aimed at clinical decision-makers, has also been proposed (DiCenso et al., 2009). This '6S Pyramid' organizes the resources that help clinicians rapidly access synthesized, high-quality research.

As shown below, the bottom tiers of the 6S Pyramid incorporate the study designs shown in the Evidence Pyramid and, above those, systematic reviews and meta-analyses (labeled 'Syntheses'). The higher tiers include research that has been further aggregated and summarized by experts.

[Figure: 6S Pyramid]

Components of the 6S Pyramid are summarized below.

Systems

Systems (also known as Clinical Decision Support Systems) integrate information from the lower levels of the evidence hierarchy with individual patient records. They are considered the ideal source of evidence for clinical decision-making, because they provide guidance that is both evidence-based and personalized.

Summaries

These are regularly updated clinical guidelines or textbooks that integrate evidence-based information about specific clinical problems (e.g. Canadian Guidelines, UpToDate).

Synopses of syntheses

Synopses of syntheses summarize information found in systematic reviews (e.g. Health Technology Assessment Database, Cochrane Summaries of Cochrane systematic reviews).

Syntheses

Syntheses include systematic reviews and meta-analyses (e.g. Cochrane Library, PubMed Health).

Synopses of single studies

These resources provide summaries of evidence from individual high-quality studies (e.g. Tools for Practice and Evidence-Based Medicine).

Single studies

As described above, these are individual research studies conducted to answer specific clinical questions. Single studies (as well as systematic reviews and meta-analyses) can be identified by searching databases such as Ovid, PubMed, Web of Science, and Scopus.

Other types of evidence

Grey literature

Grey literature is any literature that has not been published through traditional means. It includes conference abstracts, preprints, white papers, and sometimes lay media sources. It is often excluded from large databases of traditionally published literature, and identifying it requires specific search strategies.

Assessment of quality of evidence using GRADE

The Grading of Recommendations, Assessment, Development, and Evaluation (GRADE) tool was developed to assess the certainty of evidence for interventions (Prasad, 2024). Adapted versions of the tool exist for prognostic factors (Foroutan et al., 2020) and diagnostic tests (Schünemann et al., 2019).

Although the quality of evidence is a continuum, the GRADE approach classifies the certainty of a body of evidence into one of the following four grades:

- High: evidence is robust enough to draw a conclusion; we are very confident that the true effect lies close to the estimate of the effect

- Moderate: evidence supports a conclusion but further research may impact confidence in the estimate; the true effect is likely to be close to the estimate of the effect but there is a possibility that it is substantially different

- Low: additional research is likely to have an important impact on the confidence in the estimate; our confidence in the evidence is limited and the true effect may be substantially different from the estimate of the effect

- Very low: available evidence is insufficient to support any firm conclusions; we have very little confidence in the effect estimate and the true effect is likely to be substantially different

The GRADE handbook notes that the 'low' and 'very low' categories can be combined where appropriate.

The following domains are assessed when rating the certainty of evidence:

- Risk of bias (limitations of study design and conduct relative to the ideal design; differences between adjusted and unadjusted analyses may influence risk-of-bias judgements)

- Inconsistency (degree of variation in results across different studies)

- Imprecision (degree of uncertainty around effect estimates; if the confidence interval crosses the minimal clinically important difference, certainty is usually rated down)

- Indirectness (extent to which available evidence directly addresses the clinical question of interest)

- Publication bias (the possibility that studies were selectively published depending on their results)
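GRADE ratings are structured judgments rather than a calculation, but the bookkeeping behind them can be sketched. Under GRADE, a body of evidence from randomized trials starts at 'high' certainty and one from observational studies starts at 'low'; it is then rated down one level for each serious concern (two for a very serious concern) in the domains listed above. The sketch below assumes that scheme, ignores GRADE's criteria for rating observational evidence up (e.g. a large effect), and uses a simplified imprecision check. All function and domain names are hypothetical, and the `combine_low` option mirrors the GRADE handbook's note that 'low' and 'very low' can be combined.

```python
LEVELS = ["very low", "low", "moderate", "high"]
DOMAINS = {"risk_of_bias", "inconsistency", "imprecision", "indirectness", "publication_bias"}


def ci_crosses_threshold(ci_lower, ci_upper, mcid):
    """Simplified (hypothetical) imprecision check: does the CI span the MCID?"""
    return ci_lower < mcid < ci_upper


def grade_certainty(randomized, downgrades, combine_low=False):
    """Return a certainty label using a simplified GRADE-style tally.

    randomized:  True for a body of RCT evidence (starts 'high'), False for
                 observational evidence (starts 'low'); rating up is not modelled.
    downgrades:  dict mapping a domain to levels rated down
                 (0 = no serious concern, 1 = serious, 2 = very serious).
    combine_low: merge 'low' and 'very low' into a single 'low' category.
    """
    for domain, levels in downgrades.items():
        if domain not in DOMAINS or levels not in (0, 1, 2):
            raise ValueError(f"invalid downgrade: {domain}={levels}")
    start = LEVELS.index("high") if randomized else LEVELS.index("low")
    # Certainty cannot fall below 'very low' (index 0).
    level = max(0, start - sum(downgrades.values()))
    label = LEVELS[level]
    if combine_low and label == "very low":
        return "low"
    return label


# Hypothetical RCT evidence whose CI (0.2 to 1.5) spans an MCID of 0.5.
imprecise = ci_crosses_threshold(0.2, 1.5, mcid=0.5)
print(grade_certainty(True, {"imprecision": 1 if imprecise else 0}))
```

In practice each downgrade reflects reviewers' judgment about how serious a concern is, which this tally simply takes as input.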

NiaHealth uses aspects of the GRADE approach to determine the certainty of the evidence, combining the 'low' and 'very low' categories into a single category of 'low certainty'.

Our research standards & process

At NiaHealth, we do not make decisions first and look for evidence later. The entire process, from which tests we offer to how we interpret results and the recommendations we make, is grounded in clinical evidence. Our research team continually reviews the literature to make sure the information we provide reflects current medical evidence. And frankly, we don’t think “trust us” should be the standard here. We think you should be able to see the process for yourself.